Picture this.

It’s Tuesday. 8:07 a.m.

Fourteen people on a leadership call. Everyone has “updates.” Nobody has a decision.

Ninety minutes later, you’ve “aligned”… and the same issue gets punted to next week.

Then someone says, “We’re rolling out AI.”

Licenses. Tools. Training. “Adoption.”

And somehow the organization is still slow, still noisy, still stuck in the same loops—just with better-looking notes.

That framing is the problem.

AI isn’t primarily a technology shift.

It’s a decision-making shift.

Well, you might be right that your company is “using AI.”

But in my experience, if AI hasn’t reduced meeting load, shortened decision cycles, and freed your best people for higher-level thinking… you’re not getting leverage.

You’re just paying the Decision Theater Tax with nicer fonts.

The diagnostic box: 5 signs you’re buying AI activity—not AI leverage

If you recognize 3+, your AI spend is at risk.

- AI is mostly used for capped-payoff work

Emails, summaries, decks, notes. Helpful… but capped.

- Your leadership calendar looks identical

Same meeting load. Same “circle back” loops. Same “let’s sync” reflex.

- Nothing measurable improved in a real workflow

No cycle time reduction. No rework reduction. No throughput gain. Just… activity.

- AI outputs sound smart but aren’t grounded in your reality

Confident, generic answers that are quietly wrong.

- Your culture still rewards “the person who knows”

People protect their lane, avoid looking wrong, and use AI to justify what they already wanted to do.

If that’s you, this isn’t a tooling problem.

It’s a culture-and-operating-model problem.

The real risk isn’t that AI replaces your people. It’s that it replaces your organization’s thinking discipline—and you don’t notice until the bad calls compound.

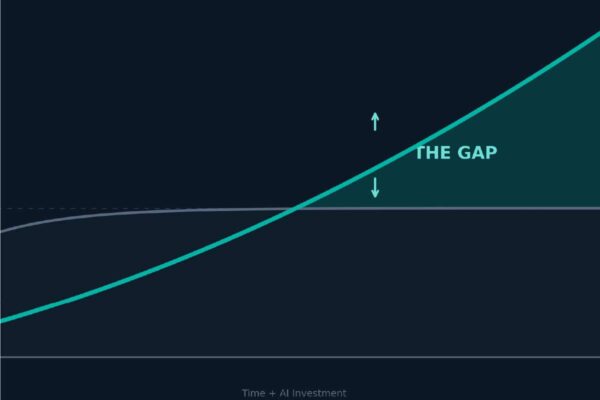

The two curves: where most companies aim AI (and miss)

Most organizations aim AI at the capped payoff curve:

- drafting internal emails

- summarizing meetings

- polishing decks

- formatting documents

- “productivity” that never hits the P&L

You get a little time back… and the org fills it with more noise. That’s not leverage. That’s faster spinning.

The missed opportunity is the uncapped payoff curve—the work where being a little better changes everything:

- decision velocity

- execution follow-through

- customer insight before it becomes churn

- margin protection (pricing, scope, rework)

- manager capacity (clarity + hard conversations earlier)

- handoffs that stop silently destroying throughput

AI isn’t a typing assistant.

AI is a leadership leverage engine—if you use it to change the system, not just the output.

Here’s what’s actually happening in companies right now

Things I’ve watched organizations do with AI this month that quietly destroyed value:

- Rolled out licenses company-wide. No change to how decisions get made. Adoption fizzled.

- Ran “prompt trainings.” Still drowning in meetings.

- Built an AI pilot. Didn’t ground it in company context. Output looked brilliant. Was wrong. (That’s the scary part.)

- Used AI to write faster. Never used AI to think better.

- Said “AI-first” while leadership still operates on tribal knowledge and gut feel.

The common thread: AI is being used to reduce effort, not improve judgment.

And judgment is where leverage lives.

The culture shift you need (and it’s not complicated)

Stop asking: “How do we use AI?”

Start asking: “Where are we slow, blind, or inconsistent in decisions?”

Because the real ROI of AI isn’t prettier work.

It’s:

- faster clarity

- better tradeoffs

- risk surfaced before it hits you

- fewer meetings to reach the same conclusion

- fewer bad calls repeated because “that’s how we’ve always done it”

AI is a mirror.

If your culture is “compliance disguised as agreement,” AI will amplify it. People will use AI to generate justification at scale.

If your culture is truth-seeking, AI becomes a force multiplier. It helps your team see what they’re missing—before the customer, the bank, or the P&L forces the lesson.

So what changes culturally?

1) Normalize challenge

AI is allowed to push back. Make it standard language:

- “What would have to be true for this to work?”

- “What’s the strongest argument against this?”

- “What are we assuming?”

- “What are we ignoring because it’s inconvenient?”

2) Reward hypothesis thinking

This is the sentence structure I want inside your leadership team:

- “Here’s what we know.”

- “Here’s what we’re assuming.”

- “Here’s what we’ll test.”

- “Here’s what we’ll do if we’re wrong.”

Most companies avoid this because it feels slow.

But you’re already paying for ambiguity—you’re just paying in meetings, rework, and escalation.

3) Make decisions visible

If it isn’t written down, it isn’t real. It’s just vibes and memory.

A single page beats a dozen meetings.

4) Kill meeting-as-decision-theater

Pre-work gets done with AI. Meetings are for:

- tradeoffs

- commitment

- resourcing

- risk acceptance

If your leadership meetings are still “updates,” you don’t have an AI strategy. You have expensive noise.

Don’t let AI quietly atrophy your leadership muscle

Here’s the part nobody wants to admit out loud:

A lot of teams are using AI the way people use a shortcut when they’re tired: not to get smarter, but to avoid thinking.

And that’s how you end up with an organization that can produce more output with less judgment.

AI makes this tempting because it’s so frictionless. It will happily give you an answer, even when it’s guessing. It will sound confident, even when it’s wrong. And if your culture already rewards fast answers and confident opinions, AI becomes a turbocharger for that behavior.

So you need a rule of the road:

Use AI to remove friction for “information work.”

Drafting, summarizing, compiling, first-pass analysis, grunt work. (Capped payoff curve.)

But…

Use AI to add friction for “transformation work.”

The work where judgment, tradeoffs, and leadership capability actually get built. (Uncapped payoff curve.)

Because long-term intelligence—individual and organizational—doesn’t come from convenience.

It comes from resistance.

That’s where the metaphor fits:

AI should be a spotter, not a wheelchair.

A spotter doesn’t lift the weight for you.

It helps you lift better—and keeps you from getting crushed.

So instead of asking AI, “What’s the answer?” you ask:

- “What am I missing?”

- “What would have to be true for this to work?”

- “What are the top risks and second-order effects?”

- “If this fails in 90 days, why?”

- “What decision am I avoiding by asking for more analysis?”

That’s how AI improves thinking instead of replacing it.

And that’s how it becomes leverage—not novelty.

Three mid-market examples (this is where leverage actually shows up)

Quoting: the bottleneck is rarely “writing”

In operational businesses, quoting often slows down because inputs are messy and tribal:

- scope details live in someone’s head

- pricing rules are “we’ll know it when we see it”

- clarifying questions happen in five scattered emails

- approvals are unclear

Using AI to draft follow-ups is fine (capped payoff).

Leverage comes when AI is used to standardize the decision process:

- a consistent scoping checklist

- a “missing inputs” flag before estimating starts

- a quote package that forces the right assumptions to be explicit

When companies do this well, it’s common to see quote turnaround move from “weeks and rework” to “days and cleaner first passes.” That’s not magic. That’s a better system.

Service: stop reacting; start detecting patterns

Summarizing tickets is nice.

But the win is when AI helps you see:

- recurring failure points

- root causes by customer segment

- handoff breakdowns by team

- early warning signals before escalation

The win isn’t less labor. It’s less avoidable pain.

Leadership: fewer meetings, better decisions, more follow-through

If AI doesn’t change:

- what you decide

- how you decide

- how decisions stick

…then it’s not leverage.

The best shift I see is simple:

AI does the pre-work. Humans do the tradeoffs.

Why mid-market has an unfair advantage (if you use it)

Mid-market companies can test faster and cheaper than enterprise:

- shorter feedback loops

- leaders closer to the work

- fewer layers between “idea” and “execution”

- the ability to course-correct quickly without a governance parade

Big companies have resources. They also have drag.

Mid-market has speed.

But here’s the urgency: your competitors don’t need a full transformation program.

They just need to get 10–20 percent faster at the right workflows—quoting, collections, scheduling, service resolution, onboarding, forecasting.

That speed becomes responsiveness.

Responsiveness becomes customer confidence.

Customer confidence becomes pricing power.

And pricing power is oxygen.

The 90-day AI Leverage Sprint (do this, not “AI adoption”)

If you want AI leverage—not novelty—run this like an operating sprint.

1) Pick 3 workflows where leverage matters

Choose where cycle time, quality, or rework hits revenue or margin:

- quote-to-cash

- service resolution

- scheduling/dispatch

- collections follow-up

- onboarding to productivity

- forecasting inputs / S&OP

If it doesn’t show up in throughput, customer experience, or margin—don’t lead with it.

2) Assign one owner per workflow

Not a committee. Not “IT.” Not “innovation.”

A named leader with authority to change the process and remove blockers.

3) Split work into Curve 1 vs Curve 2

This is the unlock.

Curve 1 (capped payoff): drafting, research, first-pass analysis, grunt work.

Use AI aggressively.

Curve 2 (uncapped payoff): judgment, tradeoffs, accountability, decision rights.

Use AI as a spotter—challenge assumptions, surface risks, compare options.

If AI touches Curve 1 but never touches Curve 2, you’ll feel “busy” and still not get leverage.

4) Install two non-negotiable artifacts

Decision One-Pager (required)

- the decision

- the owner (who decides)

- inputs considered

- assumptions (what must be true)

- risks / second-order effects

- what would change our mind

- next review date

Meeting Compression Protocol (required)

- AI-generated pre-work: options, risks, unresolved questions

- meeting agenda = tradeoffs + commitments

- meeting ends with: decision, owner, deadline, checkpoint
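If you want these two artifacts to live somewhere other than a slide, here’s a minimal Python sketch that treats the one-pager and the meeting close-out as structured records with a completeness check. The class and field names are hypothetical, chosen to mirror the lists above; this isn’t a product or a standard template, and the sample decision is invented for illustration.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import Optional


@dataclass
class DecisionOnePager:
    """One page per decision; fields mirror the Decision One-Pager list."""
    decision: str
    owner: str  # the one person who decides
    inputs_considered: list[str] = field(default_factory=list)
    assumptions: list[str] = field(default_factory=list)  # what must be true
    risks: list[str] = field(default_factory=list)  # risks / second-order effects
    would_change_our_mind: list[str] = field(default_factory=list)
    next_review: Optional[date] = None

    def gaps(self) -> list[str]:
        """Return the names of required sections that are still empty."""
        required = {
            "decision": self.decision.strip(),
            "owner": self.owner.strip(),
            "inputs_considered": self.inputs_considered,
            "assumptions": self.assumptions,
            "risks": self.risks,
            "would_change_our_mind": self.would_change_our_mind,
            "next_review": self.next_review,
        }
        # Empty strings, empty lists, and None are all falsy.
        return [name for name, value in required.items() if not value]


@dataclass
class MeetingOutcome:
    """What a compressed meeting must end with: decision, owner, deadline, checkpoint."""
    decision: str
    owner: str
    deadline: Optional[date] = None
    checkpoint: Optional[date] = None

    def is_closed(self) -> bool:
        """True only if all four outputs are present."""
        return all(
            [self.decision.strip(), self.owner.strip(), self.deadline, self.checkpoint]
        )


if __name__ == "__main__":
    # Hypothetical decision, partially written up.
    page = DecisionOnePager(
        decision="Let regional managers approve standard quotes",
        owner="VP Operations",
        assumptions=["Regional managers apply the same pricing rules"],
        next_review=date(2025, 3, 31),
    )
    print("Still missing:", page.gaps())
    # Still missing: ['inputs_considered', 'risks', 'would_change_our_mind']

    outcome = MeetingOutcome(
        decision=page.decision, owner=page.owner, deadline=date(2025, 2, 14)
    )
    print("Meeting can end:", outcome.is_closed())  # False: no checkpoint set
```

The check names what’s missing rather than scoring quality. That’s deliberate: the goal is that no decision leaves the room half-written, not that software judges the decision.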

5) Ground AI in your context

If you don’t feed AI your realities, it will fill the gaps with confident nonsense.

Ground it with:

- process maps

- KPIs

- customer segments

- pricing rules

- service data

- quality standards

- definitions of “good”

6) Build verification into the workflow

Define what must be checked, who signs off, and what gets updated when AI is wrong.

That’s not bureaucracy. That’s how you build trust at scale.

CEO scoreboard: what should be different in 30–90 days?

If AI is working, you should see evidence like:

- fewer leadership meeting hours

- faster decision cycle time

- fewer dropped handoffs

- quicker onboarding to productivity

- higher throughput per function

- fewer repeat issues / less rework

- clearer accountability (owners + deadlines that stick)

If you can’t point to evidence, you don’t have an AI strategy.

You have AI activity.

The close

AI is not here to make your people faster.

AI is here to make your organization smarter at deciding.

Well, you might be right that you’ve “implemented AI.”

But in my experience, until your culture shows proof—less meeting load, shorter cycles, cleaner handoffs—you haven’t implemented anything.

You’ve just bought software.

Where do you want leverage most right now—decisions, meetings, execution follow-through, or manager capacity?